Proof-of-Concept of Integrated Setup: Monitoring, Scheduling and Kubernetes/KubeEdge

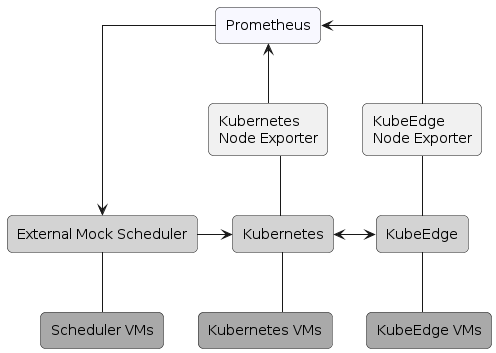

This proof-of-concept setup provides a working example of integrating an external scheduler with Kubernetes and Prometheus. It demonstrates the interaction between Prometheus and the scheduler, as well as between the scheduler and Kubernetes. In DECICE, the AI Scheduler generates scheduling decisions based on the state of the Dynamic Digital Twin, and the DECICE API is responsible for the interactions between Prometheus and Kubernetes. The setup is therefore a useful way to gain early experience and to experiment with these interactions.

The setup

The Prometheus Stack was installed on the clusters via Helm charts [1] and includes the following components:

- Grafana: a web UI for displaying Prometheus metrics.

- Node Exporters: an interface on the cluster nodes for retrieving system metrics.

- Prometheus Kubernetes Operator [2]: provides simplified configuration and management of Prometheus, specifically in Kubernetes.

Prometheus Node Exporters are running on the nodes of the clusters, exposing host system metrics. The Prometheus server is running on the Kubernetes cluster and collecting the metrics. The External Mock Scheduler queries the metrics from the Prometheus server, makes a scheduling decision based on them and propagates the decision to Kubernetes.

Scheduler

The External Mock Scheduler is written in Python and requires the Kubernetes and Prometheus API clients to be installed. The Kubernetes community provides the official Python client [3]. For Prometheus, we chose prometheus-api-client-python [4], as it provides a more straightforward API than the official Prometheus client, especially for sending custom queries to obtain metrics. The path to the Kubernetes configuration file and the URL of the Prometheus API are set via environment variables and read by the Scheduler at runtime.
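As an illustration of this configuration step, the following is a minimal sketch rather than the actual Scheduler code. The environment variable names (KUBECONFIG, PROMETHEUS_URL, SCHEDULER_NAME, SCHEDULER_NAMESPACE) and the helper names are assumptions made for this example; only the two client libraries come from the setup described above.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class SchedulerConfig:
    kubeconfig: str       # path to the Kubernetes configuration file
    prometheus_url: str   # base URL of the Prometheus HTTP API
    scheduler_name: str   # value matched against spec.schedulerName
    namespace: str        # namespace whose pod events are watched


def load_config(env=os.environ):
    """Read the settings from environment variables (hypothetical names)."""
    try:
        return SchedulerConfig(
            kubeconfig=env["KUBECONFIG"],
            prometheus_url=env["PROMETHEUS_URL"],
            scheduler_name=env.get("SCHEDULER_NAME", "decice_sched"),
            namespace=env.get("SCHEDULER_NAMESPACE", "decice"),
        )
    except KeyError as missing:
        raise RuntimeError(f"missing environment variable: {missing}") from None


def make_clients(cfg):
    """Create both API clients; third-party imports are deferred to this call."""
    from kubernetes import client, config
    from prometheus_api_client import PrometheusConnect

    config.load_kube_config(config_file=cfg.kubeconfig)
    return client.CoreV1Api(), PrometheusConnect(url=cfg.prometheus_url)
```

Keeping the environment lookup separate from client creation means the configuration can be checked without a reachable cluster or Prometheus server.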

We based the development on an existing article [5] and extended it to make a scheduling decision based on Prometheus metrics. In our case, we look at the CPU utilisation of the nodes; however, other metrics could also be used. In order not to interfere with the default Kubernetes scheduler, we explicitly specify the name of the External Mock Scheduler in the pod manifests, so that it is responsible for scheduling only these pods and not all the pods of the clusters. The overall workflow of the External Mock Scheduler is outlined below.

The Scheduler watches the pod events of a particular namespace. It proceeds only if an event is relevant, i.e. the pod status is “Pending”, the pod is not currently being initialised, and the scheduler name field (spec.schedulerName) in the pod manifest matches the External Mock Scheduler name [6].
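The relevance check described above can be sketched as a small predicate. This is an illustrative sketch, not the Scheduler's actual code: the function names are hypothetical, and treating "not being initialised" as "no node assigned yet" (spec.node_name is unset) is an assumption of this example. The attribute names follow the pod objects returned by the Kubernetes Python client.

```python
def is_schedulable(pod, scheduler_name):
    """Return True if the pod is waiting for this scheduler to place it."""
    return (
        pod.status.phase == "Pending"
        # Assumption: a pod that already has a node is being initialised.
        and pod.spec.node_name is None
        and pod.spec.scheduler_name == scheduler_name
    )


def pods_to_schedule(events, scheduler_name):
    """Yield the pods from a stream of watch events that need scheduling."""
    for event in events:
        pod = event["object"]  # watch events are dicts carrying the pod object
        if is_schedulable(pod, scheduler_name):
            yield pod
```

With the official client, the events would typically come from `watch.Watch().stream(v1.list_namespaced_pod, "decice")`, where `v1` is a `CoreV1Api` instance.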

The Scheduler then retrieves a list of healthy nodes of the Kubernetes cluster using the Kubernetes API and queries the Prometheus API for the CPU usage of the available nodes. It selects the node with the lowest CPU utilisation and creates a scheduling decision via a Binding [7] between the pod and the selected node. This scheduling decision is then propagated to the Kubernetes API, and the pod eventually runs on the selected node. Additionally, the Scheduler observes the subsequent events of the pod initialisation, such as container creation and the status change to “Running”.
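The node selection step can be sketched as follows. This is a minimal example under stated assumptions, not the Scheduler's actual implementation: the PromQL expression is one common way to derive CPU utilisation from Node Exporter metrics, the function names are hypothetical, and the example assumes that the chosen metric label (here `instance`) carries the Kubernetes node name, which depends on how Prometheus is configured.

```python
# Assumed query: fraction of non-idle CPU time per instance over 5 minutes.
CPU_QUERY = '1 - avg by (instance) (rate(node_cpu_seconds_total{mode="idle"}[5m]))'


def ready_node_names(nodes):
    """Return the names of nodes whose Ready condition is "True"."""
    names = []
    for node in nodes:
        conditions = node.status.conditions or []
        if any(c.type == "Ready" and c.status == "True" for c in conditions):
            names.append(node.metadata.name)
    return names


def cpu_usage_by_label(samples, label="instance"):
    """Map a label value to its CPU usage from an instant-query result."""
    usage = {}
    for sample in samples:
        key = sample["metric"].get(label)
        if key is not None:
            # Each sample value is a [timestamp, "number as string"] pair.
            usage[key] = float(sample["value"][1])
    return usage


def pick_least_loaded(usage, candidates):
    """Return the candidate with the lowest usage, or None if none is known."""
    known = [name for name in candidates if name in usage]
    if not known:
        return None
    return min(known, key=lambda name: usage[name])
```

In such a sketch, `nodes` would be the `items` of `v1.list_node()` from the Kubernetes client, and `samples` the list returned by `custom_query(CPU_QUERY)` of prometheus-api-client-python.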

It should be noted that the Binding interface has been deprecated since Kubernetes v1.7, and other scheduling options should be considered in the future.
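For illustration, the binding between a pod and a node can be sketched as below. This is a hedged example and not necessarily how the External Mock Scheduler issues the request: the helper names are hypothetical, and the sketch posts the Binding to the pod's binding subresource through `create_namespaced_pod_binding` of the official Python client. Passing `_preload_content=False` skips deserialising the response, which some client versions are reported to handle poorly for bindings.

```python
def binding_manifest(pod_name, node_name):
    """Build a Binding object that assigns the named pod to the named node."""
    return {
        "apiVersion": "v1",
        "kind": "Binding",
        "metadata": {"name": pod_name},
        "target": {"apiVersion": "v1", "kind": "Node", "name": node_name},
    }


def bind_pod(api, namespace, pod_name, node_name):
    """Send the binding through a CoreV1Api-like client passed in as `api`."""
    body = binding_manifest(pod_name, node_name)
    return api.create_namespaced_pod_binding(
        name=pod_name,
        namespace=namespace,
        body=body,
        _preload_content=False,  # do not parse the response into a model object
    )
```

For the pod in this setup, a call would look like `bind_pod(v1, "decice", "nginx", "ke-worker1")`, where `v1` is a `CoreV1Api` instance.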

Demonstration

To showcase the above-mentioned workflow of the External Mock Scheduler, we deploy a pod (nginx) with the following specification (note that the scheduler name is the one used to distinguish the External Mock Scheduler):

    apiVersion: v1
    kind: Pod
    metadata:
      namespace: decice
      name: nginx
    spec:
      schedulerName: decice_sched
      containers:
      - name: nginx
        image: nginx:1.14.2
        ports:
        - containerPort: 80

When we check the information about this pod, we see that its status is Pending and no node is assigned:

    $ kubectl -n decice get pod nginx
    NAME    READY   STATUS    RESTARTS   AGE
    nginx   0/1     Pending   0          8s

    $ kubectl -n decice describe pod nginx | grep Node:
    Node: <none>

When we run the Scheduler, the pod events are streamed and the nginx pod is found suitable for scheduling, as it is “Pending” and no prior events (e.g. pod initialisation) are associated with it. The Scheduler then sends requests to the Kubernetes API to obtain the list of available nodes, queries the Prometheus API to retrieve CPU usage metrics and selects the node with the lowest CPU usage. It then schedules the pod onto the selected node, as shown here:

    $ kubectl -n decice get pod nginx
    NAME    READY   STATUS    RESTARTS   AGE
    nginx   1/1     Running   0          14s

    $ kubectl -n decice describe pod nginx | grep Node:
    Node: ke-worker1/10.254.1.16

In this proof-of-concept setup, we achieved the integration of an external scheduler with Kubernetes and Prometheus. This early experiment will be used later in the development and integration of the DECICE framework.

Author: Kamil Tokmakov (HLRS)

References

[1] Prometheus Stack, Available at: https://github.com/prometheus-community/helm-charts/tree/main/charts/kube-prometheus-stack, accessed on 27.09.2023

[2] Prometheus Kubernetes Operator, Available at: https://github.com/prometheus-operator/prometheus-operator, accessed on 27.09.2023

[3] Kubernetes Python client, Available at: https://github.com/kubernetes-client/python, accessed on 27.09.2023

[4] Prometheus API Python client, Available at: https://github.com/4n4nd/prometheus-api-client-python, accessed on 27.09.2023

[5] Sebastien Goasguen, Kubernetes Scheduling in Python, Available at: https://sebgoa.medium.com/kubernetes-scheduling-in-python-3588f4928b13, accessed on 27.09.2023

[6] Kubernetes Documentation: Configure Multiple Schedulers, Available at: https://kubernetes.io/docs/tasks/extend-kubernetes/configure-multiple-schedulers/, accessed on 27.09.2023

[7] Kubernetes Documentation, Kubernetes API: Binding, Available at: https://kubernetes.io/docs/reference/kubernetes-api/cluster-resources/binding-v1/, accessed on 27.09.2023

Keywords

HLRS, DECICE, Kubernetes, KubeEdge, Prometheus, Monitoring, Integration